When a company wants to deploy useful AI internally, the same question quickly comes up: should you choose RAG or fine-tuning?

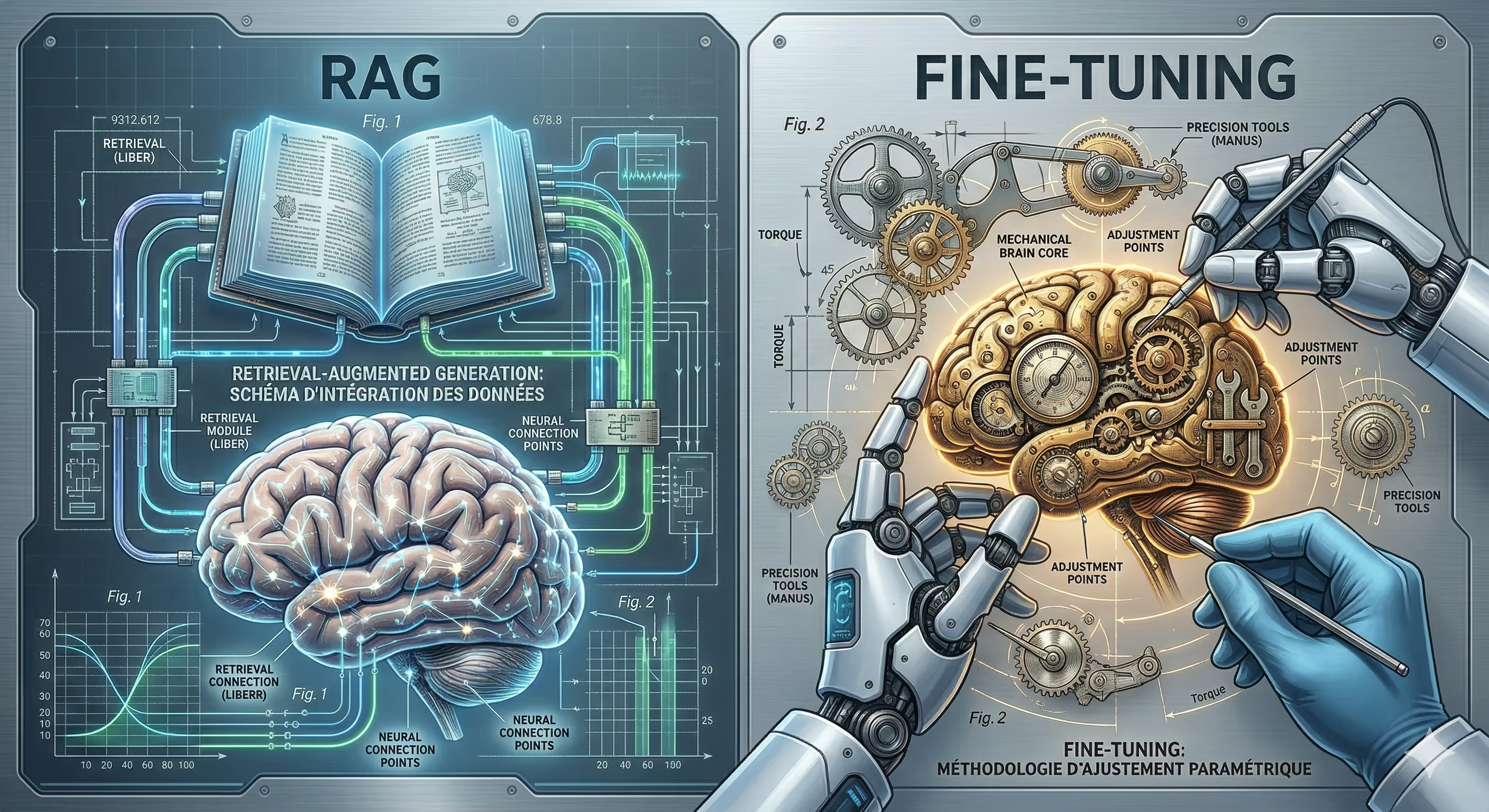

On paper, both approaches promise more relevant business AI. In practice, they do not meet the same need. RAG lets a model fetch information from an external knowledge base at response time. Fine-tuning, on the other hand, changes the behavior of the model by adapting it to a specific dataset or task.

For an SME, a B2B SaaS company or a business team, the real question is therefore not “which technology is the most advanced?” It is simpler: which solution delivers reliable, up-to-date, cost-effective and usable AI in a real context?

In this article, we will clarify the difference between RAG and fine-tuning, look at the use cases where each approach makes sense, and above all understand why the best answer in business is almost never purely theoretical.

Why this question comes up in almost every AI project

Since LLMs entered business tools, many businesses want to create an internal AI assistant, a document search engine, augmented customer support, or a co-pilot for their teams.

The problem is that a general-purpose model already knows a lot, but it doesn't know your business. It does not necessarily know your internal documentation, your processes, your offers, your business rules, or the latest version of a sales document or a quality database. That's where the need for customization comes in. An LLM knows how to generate text. To become a true business AI, it must also be constrained, connected to the right data and governed correctly.

That's why the RAG vs fine-tuning comparison has become central. It affects enterprise AI reliability, data security, the cost of going to production, and how quickly the system can evolve.

What exactly is RAG?

RAG stands for Retrieval-Augmented Generation. Put simply, you keep a base model but give it access to external sources at response time. Before generating its response, the system searches for the most useful information in a corpus of documents, a vector database, internal documentation, a help center or a knowledge base. It then builds its response from these elements.
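The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCS` knowledge base, the function names and the word-overlap scoring are all assumptions made for the example. A real system would typically use an embedding model and a vector database for the search step, and would send the resulting prompt to an LLM, which is not shown here.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base; a real system would index many documents.
DOCS = {
    "refund-policy": "Refunds are accepted within 30 days of purchase with a receipt.",
    "shipping": "Standard shipping takes 3 to 5 business days in Europe.",
    "support-hours": "Support is available Monday to Friday from 9am to 6pm.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (toy stand-in for embeddings)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, docs, k=2):
    """Return the ids of the k most similar documents, ignoring zero-score ones."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in docs.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(question, docs, k=2):
    """Assemble the prompt that would be sent to the base model."""
    ids = retrieve(question, docs, k)
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in ids)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {question}"


print(build_prompt("Are refunds accepted without a receipt?", DOCS))
```

The model itself is untouched: only the prompt changes from one question to the next, which is why updating a document is enough to update the answers.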

It is an approach well suited to companies that want to connect an AI to content that keeps changing: contracts, procedures, product sheets, support documentation, reports, FAQs, HR databases, technical documentation or sales material.

In other words, RAG does not try to teach the model all of your data. Instead, it lets the model look that data up at the right moment.

It is often the first relevant enterprise AI architecture when you want to create a reliable AI assistant without starting from scratch.

What exactly is fine-tuning?

Fine-tuning takes a pre-trained model and adapts it to a more specific need. Here, you don't just give it context at response time. You actually modify the model so that it responds in a certain way, with a tone, format, behavior, or skill that is better aligned with the task at hand.

This is useful when the real need is less about access to up-to-date information than about the behavior of the model itself.

For example, a business might want a model that:

always responds according to a precise structure,

classifies requests according to a business taxonomy,

rephrases text in a consistent register,

generates outputs in a rigorous format,

or performs a specialized task better than a general-purpose model.

Fine-tuning is therefore about deep customization. You don't just connect the model to business data; you make it better at a targeted task.
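To make this concrete, here is a hedged sketch of how validated examples might be turned into a training file for a classification task. Everything in it is illustrative: the taxonomy, the sample requests and the `prompt`/`completion` record schema are assumptions, and the exact format expected depends on the fine-tuning service or framework you use.

```python
import json

# Hypothetical taxonomy and validated examples; in practice these would come
# from historical requests reviewed by the business team.
TAXONOMY = {"billing", "technical", "account"}

EXAMPLES = [
    ("I was charged twice this month", "billing"),
    ("The export button returns an error", "technical"),
    ("How do I change my login email?", "account"),
]


def to_training_records(examples, taxonomy):
    """Turn (text, label) pairs into records, rejecting labels outside the taxonomy."""
    records = []
    for text, label in examples:
        if label not in taxonomy:
            raise ValueError(f"unknown label: {label}")
        # "prompt"/"completion" is an assumed schema, not a specific vendor's format.
        records.append({"prompt": f"Classify the request: {text}", "completion": label})
    return records


def to_jsonl(records):
    """Serialize records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


print(to_jsonl(to_training_records(EXAMPLES, TAXONOMY)))
```

The point of the sketch is the shape of the data: fine-tuning learns from pairs of input and expected output, not from raw documents.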

RAG or fine-tuning: the difference that really matters

The difference between RAG and fine-tuning can be summed up simply.

With RAG, you improve access to knowledge.

With fine-tuning, you improve the behavior of the model.

It is the most useful distinction for a decision maker.

If your problem is: “our AI must respond with the right information, up to date, from our internal documents”, you are very often in a RAG use case.

If your problem is: “our AI needs to learn a specific way of responding, classifying, writing or structuring”, you are moving toward fine-tuning.

AWS and Microsoft sources also point in this direction: RAG is suited to retrieving business information and quickly integrating recent documents, while fine-tuning becomes useful when you want to durably change the behavior of the model.

Why RAG is so appealing to businesses

In a corporate setting, RAG ticks several very concrete boxes.

First, it lets you work with business data that changes often. A document repository, a catalog, a quality procedure or a support knowledge base can evolve without retraining the model after each modification. AWS rightly points out that RAG can quickly integrate new documents without fine-tuning.

Second, it helps govern the response. When the AI relies on identified sources, it becomes easier to trace what it used, to limit the scope of responses, and to reduce some hallucination risks. Reducing is not eliminating. A poorly designed RAG system can still make a mistake, retrieve the wrong document, or misinterpret a source. But in a corporate setting, it provides a much higher level of control than a simple prompt plugged into a generic LLM.
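One way to picture this traceability and scope control is a small gate between retrieval and generation. The sketch below is an assumption-laden illustration: the `Passage` structure, the 0 to 1 relevance scale and the `min_score` threshold are hypothetical choices, not features of any particular RAG product.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source_id: str  # identifier of the document the passage came from
    text: str
    score: float    # retrieval relevance, assumed to lie between 0 and 1


def grounded_context(passages, min_score=0.5, max_passages=3):
    """Keep only sufficiently relevant passages and record which sources were used."""
    relevant = [p for p in passages if p.score >= min_score]
    kept = sorted(relevant, key=lambda p: p.score, reverse=True)[:max_passages]
    if not kept:
        # Nothing relevant enough: the assistant should decline rather than improvise.
        return {"in_scope": False, "sources": [], "context": ""}
    return {
        "in_scope": True,
        "sources": [p.source_id for p in kept],
        "context": "\n".join(p.text for p in kept),
    }
```

Returning the list of sources alongside the context is what makes the final answer auditable; the threshold is what keeps the assistant inside its intended scope. Neither removes the risk of a wrong retrieval.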

Finally, the cost of RAG is often easier to scope at the start than that of a full fine-tuning project. You mainly invest in data preparation, indexing, a vector database, retrieval rules and orchestration. It is already a real project, but it is often faster to launch for a first internal AI assistant.

Why fine-tuning still has a real place

Fine-tuning did not disappear with the rise of RAG. It is simply more relevant in other situations.

It becomes worthwhile when you want strong consistency on a type of output, for example in business workflows where the expected format is strict, or in classification, structured extraction, moderation, specialized analysis or highly supervised generation tasks.

Microsoft makes an essential point: prompting and RAG can provide context, but they do not really change the behavior of the model. If your goal is to align this behavior in a stable way, fine-tuning makes sense.

Fine-tuning can also be useful when the company has a sufficient volume of high-quality, well-annotated examples, and a use case stable enough to justify the investment.

On the other hand, many teams overestimate its value too early. They want to “train their AI on their documents”, when their real need is simply to make these documents properly searchable through a RAG system. This is a common mistake in enterprise AI projects.

The most common bad reflex: fine-tuning to inject knowledge

This is often where projects get complicated.

A company says to itself, “We have a lot of internal documents, so we're going to fine-tune the model on them.” In reality, that is not always the right approach. If content changes, if business knowledge evolves, if documents are numerous and heterogeneous, fine-tuning quickly becomes cumbersome to maintain.

RAG became popular for precisely this reason: it grounds answers in external, updatable sources rather than trying to store all the knowledge in the model's weights. IBM and AWS both present this logic clearly.

In the real life of an SME, this is very often the right first step.

Which AI solution for which business use case?

The right approach depends less on the buzzword and more on the business problem.

Let's take a few concrete situations.

A support team wants an assistant that can respond based on a help center, technical articles, and internal procedures. Here, RAG is often the best choice. The main need is access to reliable and up-to-date information.

A sales department wants an assistant that helps rephrase proposals, summarize meetings and quickly find arguments in internal documents. Again, RAG often has the advantage, provided that the content is well structured.

A business team wants an engine that automatically classifies files according to a very specific logic, with outputs in a required format. Here, fine-tuning can become relevant, especially if you have a history of validated examples.

A SaaS product wants to embed an AI with a very stable tone, structure and response style within a limited scope. In this case, a hybrid approach may be the best option: RAG for fresh knowledge, fine-tuning for behavior.

In fact, this is the reality of many serious projects. RAG and fine-tuning are often pitted against each other as if you had to choose a side. In practice, the two can complement each other.

And reliability in all of this?

That is the heart of the matter.

A reliable business AI is not just an AI that “responds well.” It is an AI that responds accurately, within the right scope, with an appropriate level of confidence, based on controlled sources, and with consistent behavior over time.

RAG improves reliability when the main problem is access to the right information. It can also make the response more auditable if the architecture includes the right sources, the right document chunking and the right safeguards. But a RAG system plugged into dirty data will produce dirty answers.
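Splitting documents into passages before indexing is one of the design decisions that affects retrieval quality. As a rough sketch, assuming a simple word-based split with overlap (the function name and the default sizes are arbitrary choices for illustration, and real pipelines often split on headings, paragraphs or tokens instead):

```python
def chunk_words(text, size=50, overlap=10):
    """Split text into chunks of at most `size` words, sharing `overlap` words
    between consecutive chunks so that a sentence cut at a boundary still
    appears whole in at least one chunk."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

Chunks that are too large dilute the relevant passage; chunks that are too small lose context. The right values depend on the documents and are best settled by testing on real questions.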

Fine-tuning improves reliability when the main problem is behavioral consistency. It can make the model more disciplined on a given task. On the other hand, it replaces neither a clean data strategy nor clear governance of sources.

In other words, enterprise AI reliability doesn't just depend on the choice between RAG and fine-tuning. It also depends on document quality, architecture, conversational design, access rights, monitoring and business tests.

That's why a well-run AI project rarely looks like a simple model integration. It's more like a product project.

The subject that is often underestimated: data quality

A lot of projects fail here.

We debate for weeks about the best enterprise LLM, the cost of RAG or the cost of fine-tuning, while the real weakness comes from corporate data: outdated documents, duplicates, wrong versions, contradictory information, lack of structure, poorly managed access rights.
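Some of these problems can be detected automatically before anything is indexed. The sketch below flags exact duplicates (after normalizing case and whitespace) and documents that have not been updated recently. The document fields `id`, `text` and `updated`, as well as the one-year threshold, are assumptions chosen for the example; contradictions and wrong versions usually still need human review.

```python
import hashlib
from datetime import date


def normalize(text):
    """Lowercase and collapse whitespace so trivial variations hash identically."""
    return " ".join(text.lower().split())


def audit(docs, today, max_age_days=365):
    """Flag duplicate and stale documents.

    `docs` is a list of dicts with the assumed keys 'id', 'text' and 'updated' (a date).
    """
    first_seen = {}
    duplicates, stale = [], []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc["text"]).encode("utf-8")).hexdigest()
        if digest in first_seen:
            duplicates.append((doc["id"], first_seen[digest]))  # (copy, original)
        else:
            first_seen[digest] = doc["id"]
        if (today - doc["updated"]).days > max_age_days:
            stale.append(doc["id"])
    return {"duplicates": duplicates, "stale": stale}
```

A report like this is a useful starting point for the conversation with document owners, well before any model is chosen.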

An internal AI assistant doesn't magically become reliable. It reflects the level of clarity of your information system.

That's also why the most effective projects don't always start with tech. They start by framing the use case, sources of truth, user roles, and the acceptable level of risk.

RAG cost vs fine-tuning cost: what to really watch

The cost debate is often framed badly.

The cost of RAG is not just a model cost. It includes content preparation, indexing, a vector database, orchestration, security, testing, and maintenance of the document pipeline.

The cost of fine-tuning is not just a training cost. You also need to factor in the collection and quality of examples, data cleaning, iterations, evaluation, deployment, and sometimes the need to repeat cycles when the business context changes.

In many cases, RAG pays off faster for a company that wants to connect an AI to its business knowledge. AWS highlights this for question-answering systems based on custom documents.

But watch out for the “RAG = always cheaper” shortcut. At scale, a poorly designed RAG architecture can also be expensive in terms of tokens, latency, and maintenance. The right choice therefore depends on the volume of use, the complexity of the documents and the level of business requirements.
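A quick back-of-the-envelope calculation shows why the retrieved context matters for token spend. The function below is a simplified sketch: it ignores infrastructure, indexing and maintenance, and every number in the usage example (volumes, token counts, prices per 1,000 tokens) is a placeholder to be replaced with your own figures, not a real price list.

```python
def monthly_token_cost(queries_per_month, context_tokens, question_tokens,
                       answer_tokens, price_in_per_1k, price_out_per_1k):
    """Estimate monthly LLM spend for a RAG assistant from token volumes only."""
    # Every query sends the retrieved context plus the question as input.
    input_tokens = queries_per_month * (context_tokens + question_tokens)
    output_tokens = queries_per_month * answer_tokens
    return input_tokens / 1000 * price_in_per_1k + output_tokens / 1000 * price_out_per_1k


# Placeholder figures: same traffic, but four times more retrieved context.
lean = monthly_token_cost(10_000, 2_000, 50, 300, 0.001, 0.002)
heavy = monthly_token_cost(10_000, 8_000, 50, 300, 0.001, 0.002)
print(f"lean retrieval: {lean:.2f}, heavy retrieval: {heavy:.2f}")
```

With these placeholder values, the input side dominates the bill, so stuffing more passages into each prompt multiplies the cost even when traffic stays flat.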

AI data security: the criterion that often changes the decision

As soon as we talk about business AI, AI data security becomes a decisive topic.

Who can query what? Which documents are accessible? Does the assistant respect access rights? Can answers be compartmentalized by team? Are the sources hosted properly? Are the logs under control?

Again, the choice between RAG and fine-tuning is not enough. A secure project depends above all on the overall architecture.

RAG requires real discipline on sources, permissions, indexing and context retrieval. Fine-tuning, on the other hand, raises other questions, in particular about the data used to adapt the model and how that data is governed.
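On the RAG side, one common pattern is to filter documents by permission before the search runs, so that restricted content never reaches the prompt. The sketch below assumes each indexed document carries a hypothetical `allowed_groups` field; in a real deployment these rights would be synchronized from the source system rather than maintained by hand.

```python
def allowed_documents(docs, user_groups):
    """Return only the documents that share at least one group with the user."""
    groups = set(user_groups)
    return [doc for doc in docs if groups & set(doc["allowed_groups"])]


# Hypothetical index entries with their access groups.
INDEX = [
    {"id": "hr-salaries", "allowed_groups": ["hr"]},
    {"id": "kb-returns", "allowed_groups": ["support", "sales"]},
    {"id": "handbook", "allowed_groups": ["everyone"]},
]

visible = allowed_documents(INDEX, ["support", "everyone"])
print([doc["id"] for doc in visible])
```

Filtering before retrieval, rather than asking the model to keep secrets, means a document the user cannot open can never leak into an answer.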

For an SME, the right level of security is not necessarily that of a banking group. But it needs to be thought out from the start. Otherwise, the tool is quickly blocked by the IT department, legal teams or simply by the fear of doing the wrong thing.

The best choice for an SME today

In the majority of business AI projects that we see emerging, the best starting point is not fine-tuning. It is often a well-designed RAG system, built around a specific use case, connected to the company's own sources, with clear business rules and real user tests. AWS recommendations and IBM definitions point in this direction when the need involves business knowledge and evolving documents.

Why? Because most businesses first want an AI that can find the right information before they want an AI with highly personalized behavior.

Fine-tuning becomes very worthwhile afterwards, when the need is more mature, more stable, better instrumented, and when there is enough quality data to justify this additional layer.

In plain language:

if you are looking for an AI connected to your business content, think RAG first;

if you're looking for an AI that needs to learn a specific way to respond or perform a task, look at fine-tuning;

if your project becomes strategic, prepare for a hybrid architecture.
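For readers who like to see a rule of thumb written down, the three lines above can be encoded as a tiny function. This is a deliberate oversimplification with hypothetical parameter names: it ignores data quality, budget and risk, which matter just as much in a real decision.

```python
def recommend(needs_business_content, needs_specific_behavior, strategic=False):
    """Encode the rule of thumb: RAG first, fine-tuning for behavior, hybrid for both."""
    if strategic or (needs_business_content and needs_specific_behavior):
        return "hybrid"
    if needs_specific_behavior:
        return "fine-tuning"
    # Default to RAG, which is presented here as the usual starting point.
    return "rag"
```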

What a lot of businesses should do before making a decision

Before choosing an enterprise AI architecture, a few simple questions need to be answered.

Is the need about knowledge or about behavior?

Does the content change often?

Are the documents clean, usable, and prioritized?

Should the AI cite its sources, respect access rights, or remain within a tightly bounded business scope?

Are there high-quality examples available to train or adapt a model?

What is the true cost of a response error?

At this point, the question is no longer “RAG or fine-tuning” in the theoretical sense. It becomes “which AI solution fits our business in our real context?”

And that's exactly where serious framing saves time, budget, and a lot of unnecessary back and forth.

The right approach for reliable and useful AI

RAG or fine-tuning? The right answer is seldom ideological.

RAG is often the best starting point for enterprise AI that needs to access up-to-date business data, reduce AI hallucinations, and remain usable without a heavy retraining cycle. Fine-tuning becomes valuable when you need to shape behavior, response structure or performance on a very specific task with precision.

The most important thing, after all, is not choosing the buzzword. It's about building an AI architecture that lasts over time, that respects business constraints, and that brings real value to teams.

This is also where many projects are won or lost. Between a prototype that impresses in a demo and a reliable solution in production lies all the work of framing, structuring, connecting to data and deploying. At Scroll, that's exactly what we help with, through AI projects, automations, and business apps designed to be useful, robust, and truly adopted.

RAG lets an AI search for information in documents or knowledge bases when responding. Fine-tuning adapts the behavior of the model to a specific task, tone, or format. Put simply, RAG improves access to business data, while fine-tuning improves how the AI responds.

It all depends on the need. For a business AI that needs to respond based on internal documents, RAG is often more reliable because it relies on up-to-date sources. Fine-tuning becomes relevant when the objective is to obtain behavior that is more stable, more accurate or more closely aligned with a specific business use.

RAG is preferred when a company wants to connect an AI to its internal documentation, knowledge base, customer support or business procedures. This is often the best option if business data changes regularly and if reliable answers depend on access to up-to-date information.

Fine-tuning becomes useful when a business wants to customize a model for a specific task, such as classifying, extracting information, writing in a strict format, or applying a specific tone of voice. It is especially relevant when you have a quality dataset and a well-defined use case.

Yes, it is possible to combine RAG and fine-tuning in the same AI architecture. RAG makes it possible to exploit up-to-date business data, while fine-tuning makes it possible to improve the behavior of the model. This hybrid approach is often the most relevant for building enterprise AI that is reliable, useful, and well-adapted to business needs.
